AWS Enables Cross-Account Athena Queries in Quick

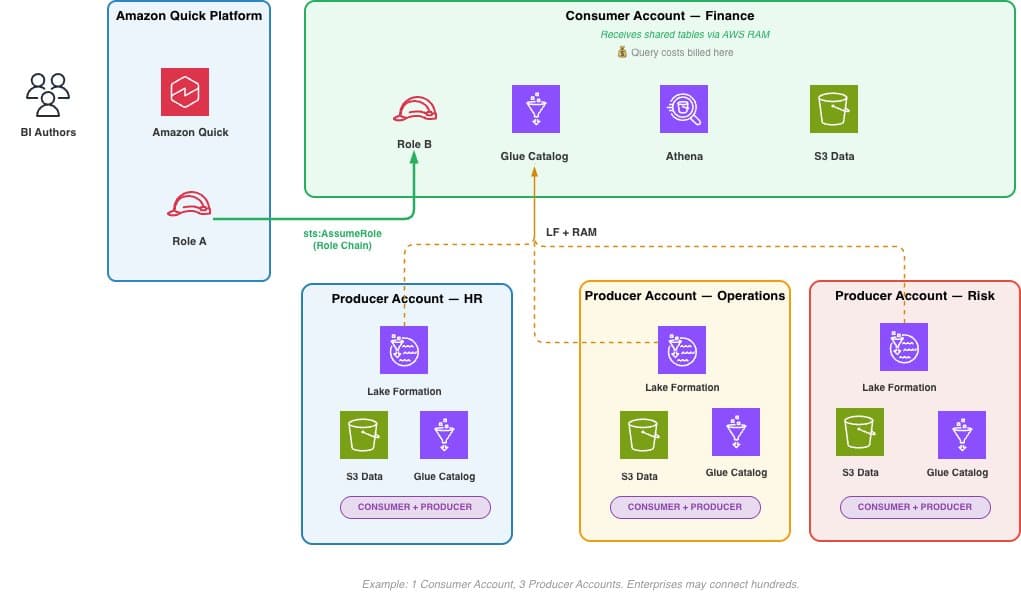

AWS announced cross-account Athena access for Amazon Quick, enabling organizations to query data across multiple AWS accounts without duplicating subscriptions or centralizing costs. The feature uses IAM role chaining to allow Quick deployments in a central account to securely access Athena data in other accounts, with query costs billed to the account where data resides. This addresses a common enterprise pattern where business units maintain separate AWS accounts but need unified analytics through a centralized Quick deployment.
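To make the role-chaining idea concrete, here is a minimal sketch of the two IAM policy documents such a setup typically involves: a trust policy on the data-account role that names the central role as a principal, and a permissions policy on the central role that lets it call `sts:AssumeRole` on that data-account role. The account IDs, role names, and helper functions are hypothetical and do not come from the announcement. The actual Quick configuration steps may differ.

```python
import json

# Hypothetical identifiers for illustration only; none come from the announcement.
CENTRAL_ACCOUNT_ID = "111111111111"
DATA_ACCOUNT_ID = "222222222222"
CENTRAL_ROLE_NAME = "QuickCentralRole"
DATA_ROLE_NAME = "AthenaDataAccessRole"


def role_arn(account_id: str, role_name: str) -> str:
    """Build an IAM role ARN from an account ID and a role name."""
    return f"arn:aws:iam::{account_id}:role/{role_name}"


def data_account_trust_policy(central_role_arn: str) -> dict:
    """Trust policy for the data-account role: allows the central role to assume it."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": central_role_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def central_role_permissions(data_role_arns: list) -> dict:
    """Permissions policy for the central role: may assume only the listed data roles."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": data_role_arns,
            }
        ],
    }


central_arn = role_arn(CENTRAL_ACCOUNT_ID, CENTRAL_ROLE_NAME)
data_arn = role_arn(DATA_ACCOUNT_ID, DATA_ROLE_NAME)

# Print both documents as JSON, the format IAM expects for policies.
print(json.dumps(data_account_trust_policy(central_arn), indent=2))
print(json.dumps(central_role_permissions([data_arn]), indent=2))
```

Because the central role only ever receives temporary credentials from the assume-role call, no long-term keys need to cross the account boundary. The data-account role would also need Athena and storage permissions of its own, which this sketch leaves out.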

TL;DR

- AWS launched cross-account Athena access for Amazon Quick, allowing queries across multiple AWS accounts via IAM role chaining

- Query costs are billed to the account where data resides, eliminating the need to absorb all costs in the central account

- The feature enables secure data access without sharing long-term credentials across account boundaries

- Enterprises can now maintain a single Quick deployment while querying data distributed across business unit accounts

Why it matters

This capability addresses a structural challenge in enterprise AI deployments where data governance and cost allocation require account separation, but analytics and insights require unified access. By enabling secure cross-account queries with proper cost attribution, AWS removes a friction point that previously forced organizations to choose between centralized analytics and distributed cost management.

Business relevance

For large enterprises with decentralized data ownership, this reduces operational overhead and cost complexity. Teams can now use a single Quick instance to analyze data across retail banking, investment banking, risk management, and other business units without managing multiple subscriptions or absorbing costs in a central budget.

Key implications

- Organizations can implement hub-and-spoke analytics architectures where Quick runs centrally but data remains in business unit accounts, improving cost transparency and accountability

- IAM role chaining becomes a standard pattern for cross-account AI workloads, potentially influencing how other AWS services handle multi-account access

- Financial services and other regulated industries gain a compliance-friendly way to maintain data isolation while enabling unified analytics
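As a rough illustration of the cost-attribution point, the sketch below totals query charges per data-owning account in a hub-and-spoke setup. The per-terabyte price, the decimal terabyte size, the account labels, and the scan sizes are all assumptions made up for the example. Real charges depend on current Athena pricing and how each account is configured.

```python
from collections import defaultdict

# Assumed values for illustration only; check current Athena pricing before relying on them.
ASSUMED_USD_PER_TB = 5.0
BYTES_PER_TB = 10**12


def attribute_costs(queries, usd_per_tb=ASSUMED_USD_PER_TB):
    """Sum the estimated scan cost per data-owning account.

    `queries` is an iterable of (account_label, bytes_scanned) pairs.
    """
    totals = defaultdict(float)
    for account, bytes_scanned in queries:
        totals[account] += bytes_scanned / BYTES_PER_TB * usd_per_tb
    return dict(totals)


# Hypothetical queries issued from one central Quick deployment.
queries = [
    ("retail-banking", 2 * 10**12),   # 2 TB scanned
    ("risk-management", 5 * 10**11),  # 0.5 TB scanned
    ("retail-banking", 10**12),       # 1 TB scanned
]

print(attribute_costs(queries))
# {'retail-banking': 15.0, 'risk-management': 2.5}
```

In this toy case each business unit's bill reflects only the data scanned in its own account, and the central account carries none of the query cost.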

What to watch

Monitor adoption patterns to see whether enterprises consolidate analytics infrastructure around Quick or keep spreading it across multiple BI tools. Watch for similar cross-account access features in competing cloud analytics platforms, and track whether AWS extends the role-chaining pattern from Athena to the data sources behind its other AI services.
