AWS Launches Native Claude Platform Access

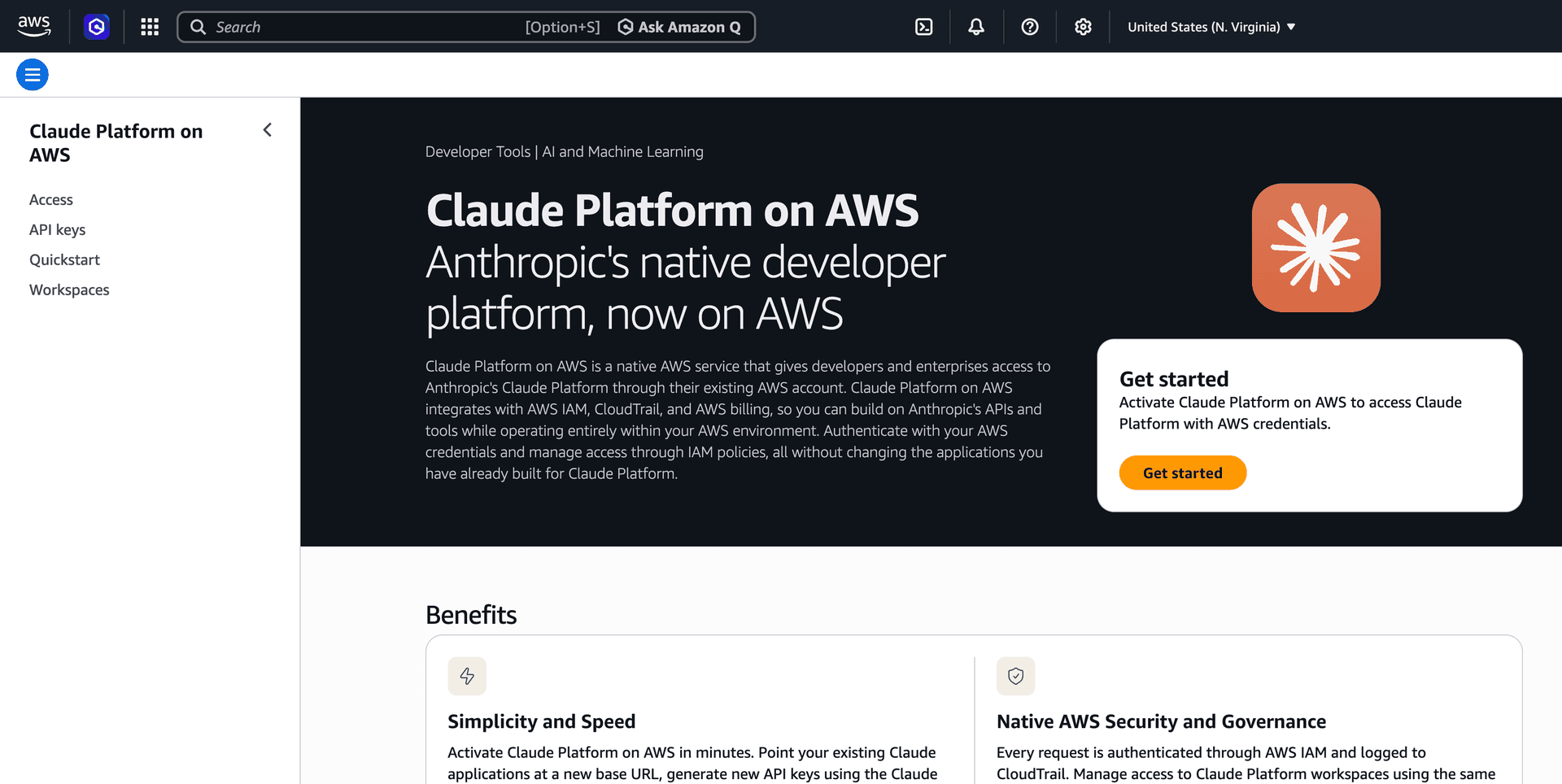

Anthropic and AWS have announced the general availability of Claude Platform on AWS, a service that provides direct access to Anthropic's native Claude Platform through AWS accounts without requiring separate credentials, contracts, or billing relationships. The offering includes the Messages API, Claude Managed Agents (beta), the advisor tool (beta), web search, web fetch, and MCP connector support, all delivered through the same console experience available directly from Anthropic. AWS becomes the first cloud provider to offer native Claude Platform access, integrating Anthropic's full feature set into the AWS ecosystem.

TL;DR

- Claude Platform on AWS is now generally available, giving customers native Anthropic platform access through their AWS account

- No separate credentials, contracts, or billing relationships are required; access is integrated directly into the AWS console and IAM

- Includes full feature parity with Anthropic's direct offering: Messages API, Claude Managed Agents (beta), advisor tool (beta), web search, web fetch, and MCP connector

- AWS is the first cloud provider to offer a native Claude Platform experience rather than access through Bedrock or similar abstraction layers

Why it matters

This move signals a deepening partnership between Anthropic and AWS that could reshape how enterprises access Claude models. By offering native platform access rather than a managed service abstraction, AWS is positioning itself as the preferred cloud home for Anthropic's full feature set, potentially influencing where organizations build Claude-powered applications. The unified billing and credential model reduces operational friction for AWS customers already embedded in the ecosystem.

Business relevance

For operators and founders, this reduces friction in adopting Claude at scale within AWS environments. Unified billing, IAM integration, and no separate vendor relationships simplify procurement and compliance workflows. Organizations already committed to AWS can now access Anthropic's latest features and agent capabilities without managing parallel platforms or credentials, lowering the operational cost of multi-model strategies.

Key implications

- AWS gains a direct distribution channel for Anthropic's platform capabilities, potentially shifting market share from other cloud providers that offer Claude access through abstraction layers

- Enterprises with AWS-first strategies now have a clearer path to adopting Claude's full feature set without vendor fragmentation, which could accelerate Claude adoption in large organizations

- The inclusion of beta features such as Claude Managed Agents and the advisor tool suggests AWS customers will have early access to Anthropic's latest capabilities, creating a competitive advantage for AWS-native builders

- This model could set a precedent for how other AI labs distribute their platforms through cloud providers, moving away from managed service abstractions toward native platform access

What to watch

Monitor whether other cloud providers (Azure, Google Cloud) respond with similar native platform offerings from competing labs such as OpenAI or Google DeepMind. Watch for adoption metrics among AWS customers and whether this offering accelerates Claude's market penetration in enterprise segments. Track whether the unified billing and IAM model becomes table stakes for cloud-based AI platform access.
