Google Embeds Affirm, Klarna in Gemini Shopping

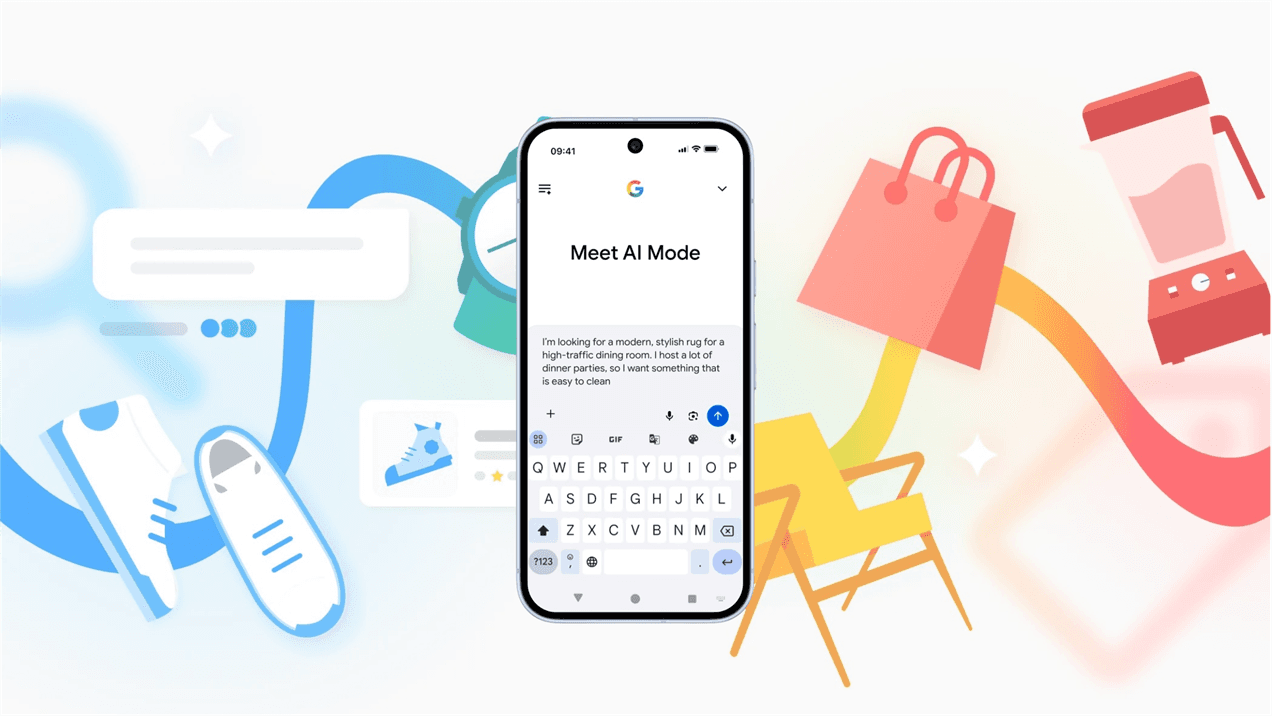

Google is integrating buy now, pay later (BNPL) services Affirm and Klarna into its Gemini app and AI search mode, expanding both fintechs' existing partnerships with the search giant. The move lets users split purchases across multiple payments directly within Google's AI shopping interfaces. It deepens Google's commerce capabilities within its generative AI products and signals tighter integration between BNPL providers and AI-driven shopping workflows.

TL;DR

- Google adds Affirm and Klarna as payment options in the Gemini app and AI search mode

- The move expands existing relationships between the fintechs and Google

- It enables buy now, pay later functionality directly in AI shopping transactions

- It reflects a broader trend of embedding financial services into AI commerce interfaces

Why it matters

This integration tightens the loop between AI-powered product discovery and frictionless checkout, making impulse purchases easier within Google's AI ecosystem. As generative AI becomes a primary shopping interface for consumers, embedding financing options directly into these flows could shift how purchase decisions are made and financed, with implications for consumer spending patterns and BNPL adoption rates.

Business relevance

For operators building commerce features into AI products, this signals that payment flexibility is becoming table stakes in AI shopping experiences. For Affirm and Klarna, distribution through Google's high-traffic AI surfaces offers a major channel to reach consumers at the moment of purchase intent, potentially driving significant volume growth without the fintechs having to acquire those customers themselves.

Key implications

- BNPL services are becoming embedded infrastructure in AI shopping rather than standalone products, shifting from consumer-facing to platform-integrated models

- Google deepens its commerce moat by offering end-to-end shopping experiences within Gemini, from discovery through payment

- Consumers gain more frictionless ways to spend, raising questions about financial health and debt accumulation

What to watch

Monitor whether other BNPL providers or alternative payment methods (credit cards, digital wallets, crypto) seek similar integrations with Google and competitors like OpenAI or Amazon. Track transaction volumes and average order values through these new payment channels to assess whether AI shopping interfaces materially change consumer spending behavior compared to traditional e-commerce.
