AWS Adds Native Payment Rails to Agent Platform

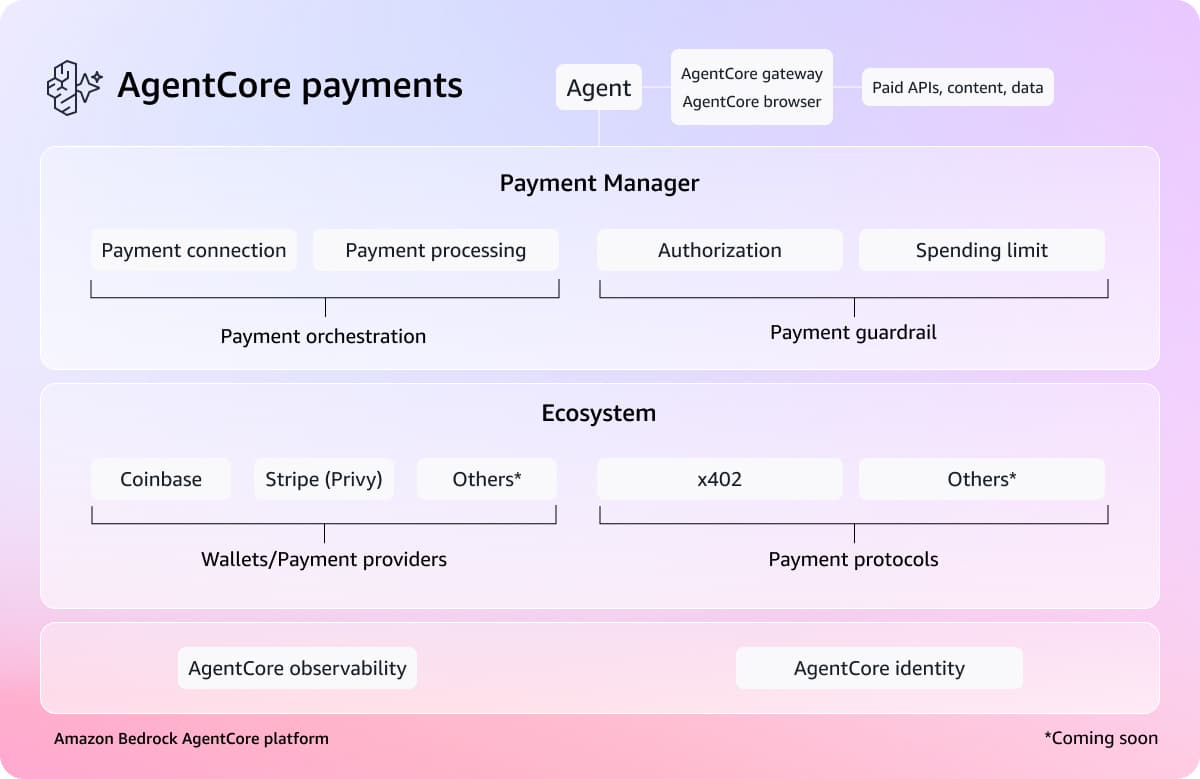

Amazon Web Services announced Amazon Bedrock AgentCore Payments in preview, a managed payment system that lets AI agents autonomously access and pay for resources including APIs, web content, MCP servers, and other agents. Built in partnership with Coinbase and Stripe, the feature integrates payment capabilities directly into the AgentCore platform rather than shipping them as a separate module, so developers can set spending limits and governance controls without manually wiring up a billing relationship with each service provider. The announcement reflects a broader shift toward an agentic economy in which agents discover, evaluate, and transact for resources in real time, though the infrastructure to support this at scale remains nascent.

TL;DR

- AWS launched AgentCore Payments, enabling agents to autonomously pay for APIs, data feeds, MCP servers, and other resources within a single execution loop

- The feature is built natively into AgentCore with Coinbase and Stripe providing wallet and payment infrastructure, eliminating manual per-provider billing setup

- Developers can set spending limits, enforce governance, and monitor transactions through the same identity and observability systems already in AgentCore

- Early use cases include financial research agents accessing paywalled data, coding agents calling specialized APIs, and future commercial transactions like flight and hotel bookings
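
To make the spending-limit idea concrete, here is a minimal sketch of how a per-transaction cap and a session budget might be enforced before an agent pays for a resource. It is an illustration only and does not use or describe the AgentCore Payments API: `SpendingPolicy`, `PaymentGuard`, `authorize`, the resource names, and the dollar amounts are all hypothetical.

```python
from dataclasses import dataclass, field
from decimal import Decimal


class SpendLimitExceeded(Exception):
    """Raised when a payment would break the configured policy."""


@dataclass
class SpendingPolicy:
    # Hypothetical policy fields; not taken from the AgentCore API.
    per_transaction_limit: Decimal
    session_budget: Decimal


@dataclass
class PaymentGuard:
    policy: SpendingPolicy
    spent: Decimal = Decimal("0")
    ledger: list = field(default_factory=list)

    def authorize(self, resource: str, price: Decimal) -> None:
        """Record the payment if it fits the policy, otherwise raise."""
        if price > self.policy.per_transaction_limit:
            raise SpendLimitExceeded(f"{resource}: price exceeds per-transaction limit")
        if self.spent + price > self.policy.session_budget:
            raise SpendLimitExceeded(f"{resource}: session budget would be exceeded")
        self.spent += price
        self.ledger.append((resource, price))  # simple audit trail


# Assumed limits: at most $0.05 per call and $0.10 per session.
guard = PaymentGuard(SpendingPolicy(Decimal("0.05"), Decimal("0.10")))
guard.authorize("market-data-api", Decimal("0.04"))
guard.authorize("news-feed", Decimal("0.05"))

blocked = None
try:
    guard.authorize("mcp-server", Decimal("0.02"))  # 0.09 + 0.02 exceeds the budget
except SpendLimitExceeded as err:
    blocked = str(err)
```

The sketch uses `Decimal` rather than floats because prices in fractions of a cent accumulate rounding error quickly, and it keeps a ledger so every approved payment can be reviewed afterward. A managed service would presumably enforce such limits on the platform side rather than in agent code.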

Why it matters

AgentCore Payments addresses a critical infrastructure gap in the emerging agentic economy. As agents move beyond task execution to autonomous resource acquisition, the ability to handle micropayments and real-time billing at scale becomes essential. AWS is positioning itself as a foundational platform for this shift by integrating payment rails directly into its agent infrastructure, potentially establishing a standard for how agents transact.

Business relevance

For enterprises building agents, this reduces engineering overhead by eliminating months of custom billing integration work and credential management across fragmented service providers. It also creates a new revenue model for API and content providers who can now be discovered and paid by agents dynamically, opening a market for specialized, agent-accessible services priced in fractions of a cent per transaction.

Key implications

- Payment infrastructure is becoming a core platform feature rather than a bolted-on service, a sign that autonomous agent transactions are moving from experiment toward production, although the feature itself is still in preview

- The partnership with Coinbase and Stripe suggests major payment processors are betting on agent-driven commerce as a significant future revenue stream

- Agents will increasingly operate as economic actors with their own spending authority, requiring new governance, compliance, and fraud prevention frameworks at the infrastructure level

- This could accelerate the adoption of micropayment protocols and enable new business models where specialized agents and APIs are priced and consumed on-demand

What to watch

Monitor how quickly developers adopt these payment capabilities and what types of resources agents begin accessing through paid channels. Watch for emerging standards around agent spending governance and compliance, particularly around fraud prevention and user authorization. Also track whether other cloud providers and payment networks launch competing agent payment platforms, which would signal that the industry is converging on payments as a standard layer of agent infrastructure.
