Mapping Causal Reasoning in LLMs with Sparse Concept Graphs

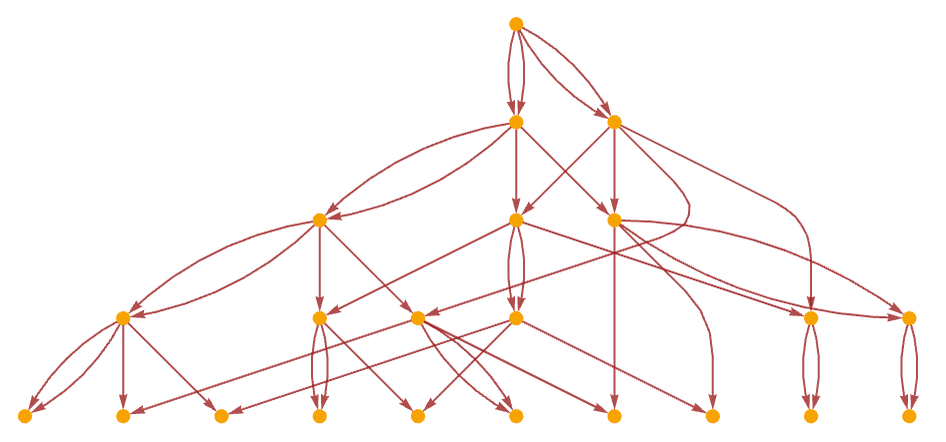

Researchers propose Causal Concept Graphs (CCG), a method that maps how concepts interact during multi-step reasoning in language models by combining sparse autoencoders with differentiable graph learning. The approach identifies sparse, interpretable latent features, learns directed causal dependencies between them, and validates those connections with a new metric, the Causal Fidelity Score (CFS). On three reasoning benchmarks with GPT-2 Medium, CCG significantly outperforms existing tracing and ranking methods on this metric, producing sparse, domain-specific graphs that remain stable across runs.
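For a concrete handle on the graph-learning half, the sketch below shows the log-determinant acyclicity penalty used by the DAGMA family of structure learners. The penalty is zero when a weighted graph is acyclic and positive otherwise, which is what lets gradient-based optimization steer toward a DAG. This is an illustration rather than the paper's code: the function name `dagma_acyclicity`, the parameter `s`, and the toy matrices are assumptions for this example, and this summary does not say which exact variant CCG uses.

```python
import numpy as np

def dagma_acyclicity(W, s=1.0):
    """Log-det acyclicity penalty: h(W) = -logdet(s*I - W∘W) + d*log(s).

    W is a d x d weighted adjacency matrix over concept features
    (W[i, j] != 0 means an edge i -> j). The formula assumes the
    spectral radius of W∘W is below s, which holds for small weights.
    """
    d = W.shape[0]
    M = s * np.eye(d) - W * W            # W * W is the elementwise square
    _sign, logabsdet = np.linalg.slogdet(M)
    return -logabsdet + d * np.log(s)

# Toy acyclic graph over three hypothetical concepts: 0 -> 1 -> 2 and 0 -> 2.
W_dag = np.array([[0.0, 0.8, 0.3],
                  [0.0, 0.0, -0.5],
                  [0.0, 0.0, 0.0]])

# Toy cyclic graph: 0 -> 1 and 1 -> 0.
W_cyc = np.array([[0.0, 0.5],
                  [0.5, 0.0]])

print(dagma_acyclicity(W_dag))  # approximately 0 for a DAG
print(dagma_acyclicity(W_cyc))  # strictly positive when a cycle exists
```

In a full learner this penalty would be added to a data-fit loss and a sparsity term, then minimized over `W`; those pieces are omitted here.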

TL;DR

- Causal Concept Graphs combine sparse autoencoders with DAGMA-style structure learning to map causal dependencies between concepts in LLM latent space

- A new metric, the Causal Fidelity Score (CFS), measures whether graph-guided interventions produce larger downstream effects than random ones, serving as a check on graph quality

- On the ARC-Challenge, StrategyQA, and LogiQA benchmarks, CCG achieves a CFS of 5.654, significantly outperforming ROME-style tracing (3.382) and SAE-only ranking (2.479)

- Learned graphs are sparse (5-6% edge density), domain-specific, and stable across seeds, suggesting genuine causal structure rather than noise
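The intuition behind the Causal Fidelity Score can be sketched as a simple ratio: how much do downstream activations move when you intervene on features the graph points to, compared with intervening on randomly chosen features? The exact formula is not given in this summary, so the ratio form, the function name `causal_fidelity_score`, the `eps` guard, and the toy numbers below are all assumptions for illustration.

```python
import numpy as np

def causal_fidelity_score(guided_effects, random_effects, eps=1e-12):
    """Hypothetical CFS: mean |effect| of graph-guided interventions
    divided by mean |effect| of random interventions.

    Each input is a sequence of downstream effect sizes, one per
    intervention (for example, the change in a target feature).
    """
    guided = np.mean(np.abs(guided_effects))
    random = np.mean(np.abs(random_effects))
    return guided / (random + eps)  # eps avoids division by zero

# Toy effect sizes, invented for this example only.
guided = [2.0, 4.0]   # interventions chosen by following graph edges
random = [1.0, 1.0]   # interventions on randomly chosen features

score = causal_fidelity_score(guided, random)
print(score)  # close to 3.0 for these toy numbers
```

Under this reading, a score above 1 means graph-guided interventions have larger downstream effects than random ones, and a score near 1 means the graph adds little over chance.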

Why it matters

Understanding how concepts causally interact during reasoning is fundamental to interpretability and control of language models. Current methods either localize individual concepts or trace information flow, but don't capture the causal structure of multi-step reasoning. This work bridges that gap with a principled approach that could enable more targeted interventions and safer model behavior.

Business relevance

For organizations deploying LLMs in high-stakes domains, interpretable causal reasoning maps could improve debugging, reduce hallucinations, and enable more precise fine-tuning. The ability to validate which concept interactions actually drive outputs has direct applications in model auditing, safety testing, and building more reliable AI systems.

Key implications

- Sparse autoencoders alone appear insufficient for understanding reasoning: SAE-only ranking trails CCG on CFS (2.479 vs. 5.654), which suggests causal structure learning is needed to capture how concepts interact during inference

- Differentiable graph learning methods can recover meaningful causal dependencies in neural networks, opening new avenues for mechanistic interpretability research

- Domain-specific graph structures that stay stable across seeds suggest that reasoning patterns are learnable, potentially enabling transfer of causal insights across related tasks

What to watch

Monitor whether CCG scales beyond GPT-2 Medium to larger models (GPT-3 or GPT-4 scale) and whether learned causal graphs transfer across different tasks or model architectures. Also track whether this approach enables practical interventions that improve model robustness or reduce specific failure modes in real applications.
