Scaling Multi-Anchor Embeddings to LLMs with 40x Compression

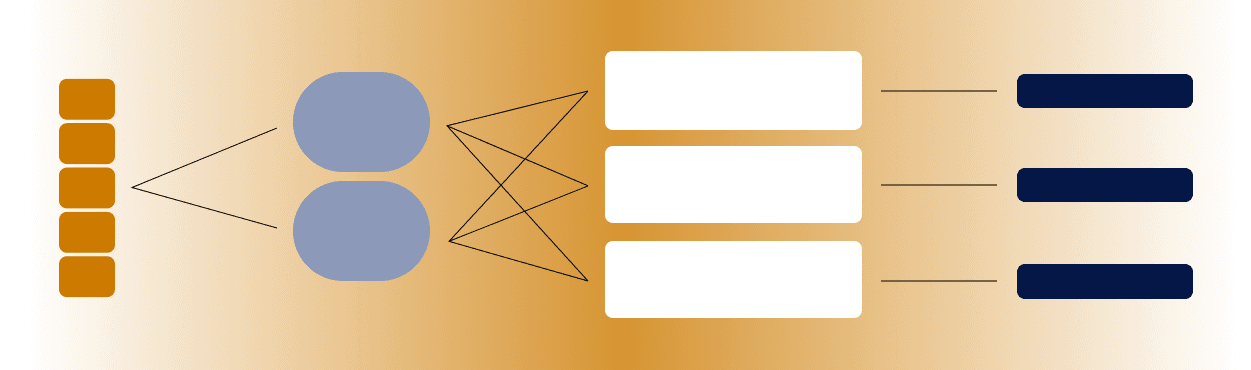

Researchers introduce Adaptive Dictionary Embeddings (ADE), a framework that scales multi-anchor word representations to large language models by replacing the traditional single vector per word with multiple context-aware vectors. The approach combines three techniques: Vocabulary Projection to optimize anchor lookup, Grouped Positional Encoding to preserve semantic coherence, and context-aware reweighting via self-attention. On text classification benchmarks, ADE matches or exceeds DeBERTa-v3-base while using 98.7% fewer trainable parameters and compressing the embedding layer by more than 40x.

TL;DR

- ADE scales multi-anchor representations, which assign multiple vectors to each word, to transformer-scale models for the first time by removing the computational inefficiencies that previously made them impractical

- Vocabulary Projection converts a costly two-stage lookup into a single matrix operation, making the approach practical at scale

- Grouped Positional Encoding lets anchors of the same word share positional information, preserving semantic coherence while keeping anchor-level variation

- On DBpedia-14, ADE outperforms DeBERTa-v3-base (98.06% vs. 97.80%) with over 40x embedding compression and 98.7% fewer trainable parameters
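The summary does not give ADE's actual formulation, so the following is only a toy sketch of two of the ideas above, under stated assumptions. `grouped_position_ids` assumes that "sharing positional information" means every anchor of a word receives that word's position index. `reweight_anchors` is a simplified stand-in for self-attention: a single context vector acts as the query and the word's anchors act as both keys and values. All function names, vectors, and sizes are hypothetical and are not taken from the paper.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def grouped_position_ids(num_words, anchors_per_word):
    """Assumption: all anchor slots of word t share position id t."""
    return [t for t in range(num_words) for _ in range(anchors_per_word)]


def reweight_anchors(anchors, context):
    """Mix one word's anchor vectors using context-dependent softmax weights.

    Simplified stand-in for self-attention: `context` is the query and
    the anchors serve as both keys and values.
    """
    scale = math.sqrt(len(context))
    weights = softmax([dot(a, context) / scale for a in anchors])
    dim = len(anchors[0])
    mixed = [sum(w * a[i] for w, a in zip(weights, anchors)) for i in range(dim)]
    return weights, mixed


# Hypothetical anchors for an ambiguous word such as "bank".
bank_anchors = [[1.0, 0.0], [0.0, 1.0]]  # a "finance" and a "river" direction
finance_context = [4.0, 0.0]
river_context = [0.0, 4.0]

w_fin, _ = reweight_anchors(bank_anchors, finance_context)
w_riv, _ = reweight_anchors(bank_anchors, river_context)

print(grouped_position_ids(3, 2))  # [0, 0, 1, 1, 2, 2]
print(w_fin[0] > w_fin[1], w_riv[1] > w_riv[0])  # True True
```

In this toy setup the same word leans toward a different anchor depending on its context, which is the behavior a single fixed vector cannot express for polysemous words.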

Why it matters

Word embeddings are foundational to NLP, but single-vector representations create bottlenecks for polysemous words and limit semantic expressiveness. This work demonstrates that multi-anchor representations, which have shown theoretical promise but remained impractical at scale, can match or exceed a strong dense baseline (DeBERTa-v3-base) on text classification while dramatically reducing parameter count. The result suggests a viable path toward more parameter-efficient language models without sacrificing performance.

Business relevance

For operators and founders building language models or deploying them at scale, parameter efficiency directly impacts inference cost, latency, and memory footprint. ADE's 40x embedding compression and 98.7% reduction in trainable parameters, achieved while maintaining or improving accuracy, offer a concrete optimization lever for production systems, particularly for edge deployment and cost-sensitive inference.
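To put the 40x figure in rough perspective, here is a back-of-the-envelope calculation for a dense embedding table. The vocabulary size (128,000) and hidden size (768) are assumptions chosen to be roughly at DeBERTa-v3-base scale; the summary does not state which baseline table the compression ratio was measured against, and fp32 storage is assumed as well.

```python
def embedding_params(vocab_size, hidden_dim):
    """Parameter count of a dense embedding table (one vector per token)."""
    return vocab_size * hidden_dim


def megabytes(num_params, bytes_per_param=4):
    """Storage in MB, assuming fp32 (4 bytes per parameter) by default."""
    return num_params * bytes_per_param / 1e6


# Assumed sizes, roughly DeBERTa-v3-base scale; not figures from the paper.
VOCAB_SIZE = 128_000
HIDDEN_DIM = 768
COMPRESSION = 40  # the "over 40x" ratio reported in the summary

dense = embedding_params(VOCAB_SIZE, HIDDEN_DIM)
compressed = dense / COMPRESSION

print(f"dense table: {dense:,} params, {megabytes(dense):.1f} MB")
print(f"at 40x:      {compressed:,.0f} params, {megabytes(compressed):.1f} MB")
```

Under these assumptions the dense table holds about 98.3 million parameters (roughly 393 MB in fp32), and a 40x reduction brings it to about 2.46 million (roughly 10 MB). The 98.7% figure refers to trainable parameters across the model and is a separate measurement that this arithmetic does not reproduce.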

Key implications

- Multi-anchor representations may offer a practical alternative to scaling model width, enabling better performance-per-parameter tradeoffs in production systems

- The techniques (Vocabulary Projection, Grouped Positional Encoding, context-aware reweighting) are modular and could be integrated into existing transformer architectures without full redesign

- Embedding layer compression at 40x suggests significant untapped efficiency gains in the non-attention components of transformers, which may shift optimization focus away from attention mechanisms alone

What to watch

Monitor whether ADE generalizes beyond text classification to generation tasks, larger models, and other domains where single-vector bottlenecks are acute. Watch for adoption in production systems and whether the parameter savings translate to real-world latency and cost improvements. Also track whether similar multi-anchor principles are applied to other model components beyond embeddings.
